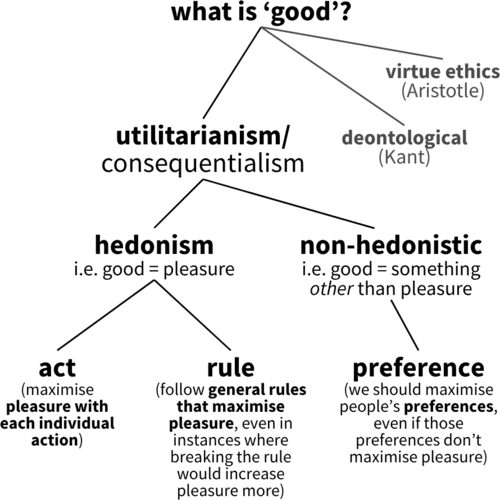

Utilitarianism is a consequentialist ethical framework. This means it says that what makes actions good or bad, right or wrong, is their consequences. The relevant consequences are usually taken to be pleasures and pains (pleasure = good, pain = bad), but there are other, non-hedonistic, forms of utilitarianism which say the relevant consequences for morality are something else.

Utilitarian ideas can be traced back at least as far as the ancient Greeks. In the 4th Century BC, Epicurus suggested that pleasure is the highest good and pain the greatest evil. In the 18th century, utilitarianism was developed into a formal ethical theory by Jeremy Bentham. Then, in the 19th century, John Stuart Mill developed Bentham’s ideas by distinguishing between higher and lower pleasures and laying the groundwork for what would later be termed rule utilitarianism.

This post covers various forms of utilitarianism and contrasts them against various issues and objections.

Hedonistic utilitarianism

“Ethics at large may be defined as the art of directing men’s actions to the production of the greatest possible quantity of happiness for those whose interests are in view”

– Jeremy Bentham, Principles of Morals and Legislation

Hedonistic forms of utilitarianism say the relevant consequences for morality are pleasure/happiness and pain/unhappiness:

- Good = happy

- Bad = unhappy

In other words, actions that increase happiness are good and actions that decrease happiness or increase pain are bad. And this has intuitive appeal: You don’t really need to explain why hurting someone is bad or why making someone happy is good.

But within hedonistic utilitarianism, there is disagreement regarding the level at which pleasures and pains should be considered:

- At the level of each individual action (act utilitarianism)

- At the level of general rules (rule utilitarianism).

Act utilitarianism

Act utilitarianism (i.e. hedonistic act utilitarianism) says we should consider the consequences of each possible action we could take, and then choose the action that maximises pleasure or happiness.

For example, if a homeless person asks you for $10, and that $10 would bring him greater pleasure/happiness than it would bring you, you should give him the $10 – because giving him the $10 results in more pleasure/happiness. There are no rules or principles beyond pleasure for (hedonistic) act utilitarianism. And so, if stealing something from a shop would make you happier than it would make the shopkeeper unhappy to be stolen from, then you should steal from the shop – stealing is the right thing to do. There’s nothing significant about it being an act of stealing; all that matters is the pleasures and pains that result.

So, hedonistic act utilitarianism says each time we act we should choose the action that maximises pleasure/happiness.

Bentham’s act utilitarianism is often referred to as quantitative utilitarianism because it focuses on measuring happiness numerically. The idea is to total up all the pleasure an action would produce, subtract all the pain it would cause, and then choose the action with the best overall result.

Jeremy Bentham’s felicific calculus (also known as the utility calculus) is his proposed method for making this kind of calculation. It includes 7 factors to consider:

“To a person (considered by himself) the value of a pleasure or pain (considered by itself) will be greater or less according to:

(1) its intensity.

(2) its duration.

(3) its certainty or uncertainty.

(4) its nearness or remoteness.

… But when the value of a pleasure or pain is considered for the purpose of estimating the tendency of an act by which it is produced, two other circumstances must be taken into the account:

(5) its fecundity, i.e. its chance of being followed by sensations of the same kind (pleasure by pleasure, pain by pain), and

(6) its purity, i.e. its chance of not being followed by sensations of the opposite kind (pleasure by pain, pain by pleasure).

… For many people the value of a pleasure or a pain will be greater or less according to seven circumstances – the six preceding ones and one other, namely

(7) its extent, i.e. the number of persons to whom it extends or (in other words) who are affected by it.”

– Jeremy Bentham, Principles of Morals and Legislation

For example, if two possible actions would produce pleasures that differ only in intensity, the morally right choice, other things being equal, would be the one that brings about the more intense pleasure.

In theory, the felicific calculus offers a way to measure overall happiness: sum up all the pleasures and subtract all the pains. Act utilitarians hold that the ethically best action is the one that produces the highest total happiness.
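The summing-and-subtracting idea can be sketched in a few lines of Python. This is only an illustration under loud assumptions: Bentham gives no numerical formula, so the `Effect` record, the `net_utility` and `best_action` helpers, the choice to multiply intensity, duration, and certainty together, and all the numbers are hypothetical. Nearness, fecundity, and purity are left out for brevity, and extent is captured by listing one effect per affected person.

```python
from dataclasses import dataclass


@dataclass
class Effect:
    """One person's pleasure or pain from an action (hypothetical units)."""
    intensity: float   # signed: > 0 for pleasure, < 0 for pain
    duration: float    # how long the feeling lasts
    certainty: float   # probability that it occurs, between 0 and 1


def net_utility(effects):
    """Add up pleasures and subtract pains (pains have negative intensity)."""
    return sum(e.intensity * e.duration * e.certainty for e in effects)


def best_action(options):
    """Return the name of the action with the highest net utility."""
    return max(options, key=lambda name: net_utility(options[name]))


# Made-up numbers for the $10 example: giving costs you a little
# and benefits the recipient a lot.
options = {
    "give $10": [Effect(-1, 1, 1.0), Effect(5, 1, 1.0)],
    "keep $10": [Effect(1, 1, 1.0)],
}

print(net_utility(options["give $10"]))  # 4.0
print(net_utility(options["keep $10"]))  # 1.0
print(best_action(options))              # give $10
```

On these invented figures the act utilitarian verdict is to give the $10; different figures would give a different verdict, which is exactly why the measurement of each factor matters so much.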

Problem: Difficulties with calculation

An issue for act utilitarianism and Bentham’s utility calculus (above), however, is that it is very difficult to follow in practice.

Firstly, it would mean you have to predict the future. But how are you supposed to do that? And how far ahead should you look? Saving a baby’s life might increase happiness in the short term, but what if that baby grows up to be a serial killer? In that case, saving the baby might have actually decreased overall happiness.

Secondly, you’d have to be able to measure and quantify each of the 7 variables for every possible outcome. But how exactly are you supposed to measure intensity of pleasure? Are we meant to hook everyone up to brain scanners? And how do you decide between a more intense but less certain pleasure versus a less intense but more certain one?

Thirdly, which beings should we include in the calculation? If the ability to feel pleasure and pain is the criterion, then cats, dogs, frogs, spiders, etc. all count. But should their pleasures and pains be treated as equal to human pleasures and pains? And if not, how do we compare e.g. a dog’s pleasure with a human’s?

So, to apply Bentham’s utility calculus strictly, you would need to:

- Predict the consequences of your actions for every sentient being, potentially far into the future.

- Measure all 7 variables for each being.

- Work out how to weigh human vs. non-human experiences of pleasure and pain.

- And do this calculation every single time you act.

This is clearly massively impractical – if not completely impossible – which suggests that utilitarianism is impossible to follow in practice.

Potential response:

“It is not to be expected that this process should be strictly pursued previously to every moral judgment, or to every legislative or judicial operation. It may, however, be always kept in view.”

– Jeremy Bentham, Principles of Morals and Legislation

Bentham’s point in the quote above is that the utility calculus should not be understood as a rigid step-by-step formula to be precisely applied before every moral decision. Instead, it is more like a compass: a guiding principle that always directs us toward the general goal of maximising happiness and minimising suffering, even if we don’t literally calculate every factor each time. In this way, Bentham distinguishes between the calculus as an ideal standard of rational assessment and its practical application, where what matters is keeping the general goal of maximising utility ‘in view’ rather than slavishly performing the exact calculations.

Potential response 2:

Rule utilitarianism (below) could simplify this calculation process. Instead of having to calculate utility every single time you do anything, rule utilitarianism allows for a one-and-done approach. For example, if we calculate that the rule “don’t steal” leads to greater happiness than not, then you just have to follow this simple rule. It might take a bit of work initially to decide which rules maximise happiness, but once these general rules are decided you simply have to follow them.

Problem: Tyranny of the majority

Act utilitarianism is all about maximising happiness – “the greatest good for the greatest number”. But what if the greatest number gets pleasure from something that causes pain to a smaller number – a minority? This is the problem of the tyranny of the majority.

For example, imagine you gathered together all the sadistic people of the world who would enjoy seeing an innocent person tortured:

- Let’s say there are 10,000 such sadistic people

- And let’s say each of these people would get 10 units of pleasure from seeing an innocent person being tortured

- So torturing an innocent person for the pleasure of these 10,000 people would result in 100,000 units of pleasure (10,000 * 10)

- And let’s say there’s an innocent person who no one really cares about who would suffer 10,000 units of pain from being tortured.

According to act utilitarianism, what’s morally right is to torture this innocent person for the pleasure of the 10,000 sadists (because the 100,000 units of pleasure for the majority outweigh the 10,000 units of pain suffered by the minority).
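The arithmetic behind this verdict can be written out explicitly. The figures come from the example above; the variable names, and the assumption that units of pleasure and pain sit on a single scale where one can be subtracted from the other, are for illustration only.

```python
# Figures taken from the sadists example above.
sadists = 10_000
pleasure_per_sadist = 10   # units of pleasure each sadist gets
victim_pain = 10_000       # units of pain the innocent person suffers

total_pleasure = sadists * pleasure_per_sadist
net_happiness = total_pleasure - victim_pain

print(total_pleasure)  # 100000
print(net_happiness)   # 90000

# Assumption: not torturing leaves overall happiness unchanged (net 0),
# so any positive net makes torture the act-utilitarian choice.
print(net_happiness > 0)  # True
```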

If we’re optimising for the greatest good of the greatest number, torturing this innocent person is morally good because it maximises pleasure. But this seems wrong: Some things are just wrong (like torturing innocent people), regardless of the consequences. So this suggests that act utilitarianism is not the correct moral theory.

Potential response:

Rule utilitarianism (below) could argue that, although there might be occasional instances where torturing innocent people leads to greater happiness, as a general rule torturing innocent people leads to greater pain than pleasure. As such, rule utilitarianism is a potential way to avoid the tyranny of the majority problem facing act utilitarianism.

Rule utilitarianism

“though the consequences in the particular case might be beneficial – it would be unworthy of an intelligent agent not to be consciously aware that the action is of a class which, if practised generally, would be generally injurious, and that this is the ground of the obligation to abstain from it.”

– John Stuart Mill, Utilitarianism

Where act utilitarianism says we should calculate utility and pleasure at the level of each specific action, rule utilitarianism says we should calculate utility and pleasure at the level of general rules.

For example, let’s say someone is weighing up whether it is morally acceptable to steal from a shop:

- Act utilitarianism might say to do it: If the thief would get more pleasure from stealing the item than the pain the shopkeeper would suffer (e.g. the thief is poor and the shopkeeper is rich), then it is morally acceptable to steal.

- But rule utilitarianism might say no: Even though stealing in this particular instance might increase happiness, as a general rule stealing causes more pain than pleasure.

One way the rule utilitarian might argue against stealing is that the consequences of an action are more than the sum of the parts. Not only might the actual pains of stealing outweigh the pleasures, there are also second-order effects. For example, if people knew that they could potentially be stolen from at any moment, so long as the thief’s happiness outweighed theirs, then they would have a constant background level of anxiety about getting their stuff robbed. As such, the rule utilitarian might justify property rights by appealing to the general positive consequences that result from such rights.

Potential response:

Strong rule utilitarianism says we should follow moral rules strictly, even in cases where breaking them would lead to greater happiness. But this can lead to ‘rule worship’ – sticking to the rule for its own sake and losing sight of utilitarianism’s ultimate goal of maximising happiness. For example, if the rule “don’t steal” generally increases happiness, then under strong rule utilitarianism you couldn’t steal even if you were starving and poor and stealing was the only way to save your family’s life.

But if we adopt weak rule utilitarianism – which says we should follow the rules unless breaking them would produce more happiness – this seems to collapse back into act utilitarianism: If we can break a rule whenever the consequences justify it, the rules lose their force and we’re back to the same problems – like the tyranny of the majority.

Problem: The utility monster

The utility monster is similar to the tyranny of the majority objection (above) except that, instead of a large number of people getting a small amount of pleasure, a single being – ‘the utility monster’ – gets a large amount of pleasure.

Imagine a being who experiences pleasure far more intensely than anyone else – so much so that the happiness gained by giving resources to this ‘monster’ outweighs the combined happiness of many others. According to utilitarianism and the felicific calculus, it would then be right to sacrifice the wellbeing of many to satisfy the utility monster’s immense pleasure.

This thought experiment exposes a potential flaw in utilitarianism: it seems to justify extreme inequality and exploitation if this inequality leads to greater total happiness, which many see as intuitively unfair and morally wrong.

Problem: The repugnant conclusion

The repugnant conclusion, first formulated by Derek Parfit, is kind of the opposite of the utility monster (above). Where the utility monster is a single being that experiences an enormous amount of pleasure, the repugnant conclusion describes a world with a huge number of people, each of whom experiences only a very small amount of pleasure (lives just barely worth living), but whose pleasures collectively outweigh those of a smaller population living extremely happy and fulfilling lives.

The argument shows that if utilitarianism measures the goodness of outcomes solely by the total amount of happiness or well-being, then a very large population living lives that are just barely worth living would be judged better than a smaller population in which everyone enjoys extremely high levels of happiness. This is because the sheer quantity of small positive experiences, when aggregated across enough people, would outweigh the more intense happiness of fewer individuals.
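A quick calculation shows how the aggregation works. The population sizes, the wellbeing scores, and the `total_wellbeing` helper are all hypothetical numbers and names chosen for illustration; the only thing taken from the argument itself is that total wellbeing is population multiplied by wellbeing per person.

```python
def total_wellbeing(population, wellbeing_per_person):
    """Total view: the value of a world is the sum of everyone's wellbeing."""
    return population * wellbeing_per_person


# Hypothetical worlds: a small flourishing population versus a huge
# population whose lives are only just worth living.
small_happy_world = total_wellbeing(1_000_000, 100)
huge_mediocre_world = total_wellbeing(1_000_000_000, 1)

print(small_happy_world)                        # 100000000
print(huge_mediocre_world)                      # 1000000000
print(huge_mediocre_world > small_happy_world)  # True
```

However low the per-person figure is set, as long as it stays above zero there is some population size large enough to make the second total bigger.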

But this conclusion seems counterintuitive or ‘repugnant’ because it seems to imply that a world of vast mediocrity is better than a world with fewer people but where those people are flourishing and living great and fulfilling lives. As such, the repugnant conclusion challenges the plausibility of the felicific calculus and utilitarianism’s aggregation principle of “the greatest good for the greatest number”.

Problem: Doctrine of swine

Hedonistic utilitarianism says morality is all about pleasures and pains. Good = pleasure, bad = pain. And Bentham’s quantitative act utilitarianism (above) says pleasure is pleasure and so there’s nothing better or more morally valuable about pleasure gained from binge-eating fast food as you mindlessly scroll social media compared to, for example, the pleasure gained from spending time in nature with your family. If the former results in more pleasure than the latter, then it’s better and more morally valuable according to quantitative hedonism because all that matters is the quantity of pleasure.

This leads to the criticism that utilitarianism is a ‘doctrine of swine’ in that it reduces the moral value of human life to that of pigs and animals – that there is nothing more to life than the base pleasures that can be felt by animals and that there is nothing better about a human life spent e.g. helping others and pursuing knowledge than the life of a happy pig rolling around in the mud, eating, and having sex with other pigs.

“This calls up a pleasant picture of the voluptuary of the future, a bald-headed man with a number of electrodes protruding from his skull, one to give the physical pleasure of sex, one for that of eating, one for that of drinking, and so on. Now is this the sort of life that all our ethical planning should culminate in?”

– J.J.C. Smart, Utilitarianism: For and Against

Potential response:

Mill’s qualitative hedonism (below) introduces a distinction between higher and lower pleasures, distinguishing the moral value of human life from that of ‘lower’ animals like pigs.

Mill’s qualitative hedonism

“Few human creatures would consent to be changed into any of the lower animals, for a promise of the fullest allowance of a beast’s pleasures; no intelligent human being would consent to be a fool, no instructed person would be an ignoramus, no person of feeling and conscience would be selfish and base, even though they should be persuaded that the fool, the dunce, or the rascal is better satisfied with his lot than they are with theirs… A being of higher faculties requires more to make him happy, is capable probably of more acute suffering, and certainly accessible to it at more points, than one of an inferior type; but in spite of these liabilities, he can never really wish to sink into what he feels to be a lower grade of existence… Whoever supposes that this preference takes place at a sacrifice of happiness – that the superior being, in anything like equal circumstances, is not happier than the inferior – confounds the two very different ideas, of happiness, and content. It is indisputable that the being whose capacities of enjoyment are low, has the greatest chance of having them fully satisfied; and a highly endowed being will always feel that any happiness which he can look for, as the world is constituted, is imperfect. But he can learn to bear its imperfections, if they are at all bearable; and they will not make him envy the being who is indeed unconscious of the imperfections, but only because he feels not at all the good which those imperfections qualify. It is better to be a human being dissatisfied than a pig satisfied; better to be Socrates dissatisfied than a fool satisfied. And if the fool, or the pig, are of a different opinion, it is because they only know their own side of the question. The other party to the comparison knows both sides.”

– John Stuart Mill, Utilitarianism

Where Bentham’s approach with the utility calculus was purely quantitative, John Stuart Mill introduced a qualitative distinction in pleasures:

- The ‘higher pleasures’ of thought, feeling, imagination, and morality

- The ‘lower pleasures’ of the body – the pleasures that can be felt by pigs and other animals – such as eating and sex.

So where Bentham saw all pleasures as equally valuable – a purely quantitative approach – Mill argues that some pleasures are qualitatively more valuable. Mill’s justification for this increased value is that people who have experienced both higher and lower pleasures always prefer the higher pleasures – they place more value on them.

Mill distinguishes between happiness and contentment. He argues that human beings are more complex than pigs and other animals when it comes to happiness. Unlike animals, whose happiness might be satisfied by basic or ‘lower’ pleasures, humans require more than simple contentment to be truly happy.

Mill’s key point is that utilitarianism, properly understood, calls for maximising higher pleasures – those intellectual and emotional experiences unique to humans – which are qualitatively superior to the lower pleasures enjoyed by animals.

Potential response:

“[Mill] commends the idea that ‘happy’ is a partly evaluative term, in the sense that we call ‘happiness’ those kinds of satisfaction which, as things are, we approve of. But by what standard is this surplus element of approval supposed, from a utilitarian point of view, to be allocated? There is no source for it, on a strictly utilitarian view, except further degrees of satisfaction, but there are none of those available, or the problem would not arise.”

– Bernard Williams, Utilitarianism: For and Against

Williams’ response here argues that Mill’s attempt to distinguish between ‘higher’ and ‘lower’ pleasures can’t be justified within the framework of hedonistic utilitarianism itself. If utilitarianism defines value purely in terms of the amount of pleasure an experience produces, then the question arises: why do we approve of the ‘higher’ pleasures more than others, even when they are no more pleasurable?

Introducing a further evaluative layer – calling some pleasures ‘higher’ because we approve of them – seems inconsistent. From a strictly utilitarian standpoint, the only possible ground for approval would be that the ‘higher’ pleasures generate more pleasure overall. But if these higher pleasures really were more pleasurable, we wouldn’t need to appeal to ‘higher’ quality pleasures because the issue wouldn’t arise. So, Williams argues that Mill’s higher–lower distinction smuggles in a value standard beyond mere pleasure, undermining the coherence of hedonistic utilitarianism.

Problem: Other values besides pleasure

“Suppose there were an experience machine that would give you any experience you desired. Superduper neuropsychologists could stimulate your brain so that you would think and feel you were writing a great novel, or making a friend, or reading an interesting book. All the time you would be floating in a tank, with electrodes attached to your brain. Should you plug into this machine for life, preprogramming your life’s experiences?”

– Robert Nozick, Anarchy, State, and Utopia

Robert Nozick’s experience machine thought experiment is like perfect VR: A virtual simulation that you can plug into and experience the perfect life that optimises for pleasure. But it’s a fake life. And once you’re plugged in, you don’t realise your experiences aren’t real – you think you’re living a real life.

Because the experience machine optimises for pleasure (you’d have the experience of the most pleasurable life possible), hedonistic utilitarianism implies everyone should go into the experience machine and live out their days in this fake world to maximise pleasure.

But many people would prefer not to go into the experience machine despite it being more pleasurable than real life. This suggests we place moral value on things besides pleasure, like being in contact with reality, or actually doing things rather than simply experiencing doing things. This illustrates a problem with Bentham and Mill’s hedonism: There is more to morality than just pleasure.

If you wanted to make the objection a different way, you could argue that:

- Hedonistic utilitarianism says good = maximising pleasure, bad = not maximising pleasure

- Forcing people into the experience machine against their will would maximise pleasure

- So it is morally right to force people against their will into the experience machine

- But it’s morally wrong to force people into the experience machine against their will

- So hedonistic utilitarianism is wrong.

Potential response:

Preference utilitarianism (below) rejects hedonism and says morality is about maximising the satisfaction of preferences, not pleasures. If someone would prefer to live in the real world – even if it leads to less pleasure – preference utilitarianism says we should respect that.

Preference utilitarianism

Preference utilitarianism shifts the focus from maximising pleasure (hedonism) to maximising the satisfaction of people’s preferences. Instead of assuming that happiness is always about feeling pleasure, preference utilitarianism argues that what really matters is fulfilling what individuals want or prefer, even if those preferences don’t always lead to the greatest pleasure.

For example, some people might prefer not to plug into the experience machine (see above) because they value living a genuine life in the real world, even if it means experiencing less pleasure overall. Preference utilitarianism respects that choice, emphasising the importance of honouring people’s authentic desires rather than just their feelings of pleasure.

Another example is a monk who chooses to live an ascetic and disciplined life for religious or spiritual reasons. Preference utilitarianism would say we should support the monk’s preference for that lifestyle, even if it doesn’t maximise pleasure in the hedonistic sense. In contrast, hedonistic utilitarianism, which focuses solely on pleasure and pain, might argue that the monk should abandon his asceticism and pursue a life of indulgence and enjoyment.

By focusing on preferences rather than pleasure alone, preference utilitarianism aims to better capture the complexity of human motivations and what people truly value. It also helps address some criticisms of hedonistic utilitarianism, especially those related to cases where pleasure is not the sole or even the main goal.

Potential response:

A potential issue for preference utilitarianism is what to do in the case of conflicting preferences.

For example, a serial killer might have a preference to kill someone, while the victim obviously prefers not to be killed. So whose preference should count? With hedonistic utilitarianism, the answer might be clearer because pleasures and pains can (at least in theory) be measured using Bentham’s felicific calculus (above). The victim’s pain and loss of future happiness would almost certainly outweigh the killer’s pleasure. But preference utilitarianism doesn’t reduce preferences to a common measurable scale – it’s just one preference against another, and there’s no obvious way to decide which should take priority.

One possible solution might be a democratic approach: if most people prefer to live in a society where killing is forbidden, then that preference would take precedence. But this opens the door to the tyranny of the majority problem again: If most people would prefer to live in a society where an innocent person is tortured for the masses, would that make it morally good?

Potential response 2:

Another issue for preference utilitarianism is trivial preferences.

For example, someone might prefer to spend their whole life trying to write as small as possible. Another person might prefer to spend their life curing cancer.

If moral value is determined entirely by preferences, on what grounds can we say the latter has any more moral value than the former? 1 preference = 1 preference, so preference utilitarianism may struggle to explain how one preference could be better than another.

While there is nothing wrong with spending your life making your handwriting as small as possible, moral philosophy aims to define what’s truly good. And just because someone prefers to spend their life on something trivial that doesn’t necessarily mean it is morally valuable.

Problem: Issues re. partiality

An argument to support utilitarianism is that it’s equal and fair: Pleasure is pleasure and so no single person’s happiness can be prioritised over anyone else’s – everyone’s happiness has equal moral worth according to hedonistic utilitarianism. But this same fairness and impartiality can also become a bit of a problem if we have duties towards certain people, such as family and friends. For example:

- Let’s say you want to buy your mother a present for her birthday

- You see the perfect gift for her, and it costs $10

- But then you run Bentham’s utility calculus (above) and it turns out that donating that $10 to Joe Bloggs in Mozambique – who you’ve never met – would increase happiness more effectively

- So, (hedonistic act) utilitarianism suggests it is morally wrong to buy your mother the birthday present as this action does not maximise happiness.

And you can extend this example to basically every decision such that you’d never spend any money or even time with your family and friends. For example, the time you spent with your friends made them happy, but volunteering at the local soup kitchen would have produced more happiness overall. So, you acted wrongly by spending time with your friends because this did not bring about the greatest happiness for the greatest number.

There are a couple of ways you can make this objection:

- The first is that living your life like this is massively impractical.

- The second is to say we have moral obligations and duties to certain people, such as family and friends. Children have duties to their mothers, fathers to daughters, friends to their friends, and so on. We might argue that it is wrong to prioritise random strangers over these people, i.e. that it is wrong to ignore these duties. And yet utilitarianism says this is what we should do. And so, the argument goes, utilitarianism is wrong because it ignores the importance of these moral duties.

Problem: Ignores intentions

We typically think a person’s intentions do have moral relevance. For example:

- Attempted murder is still a criminal offence even if the attempt causes no actual harm.

- If you trip someone up on purpose and hurt them, this act is far worse than if you accidentally tripped them – even if both actions caused the same amount of pain.

However, because utilitarianism only cares about consequences, it seems to ignore the moral relevance of a person’s intentions.

For example, imagine a bitter citizen who wants to poison his town’s water supply to kill everyone. However, he miscalculates the dose and, instead of causing harm, he just gives everyone a mild and pleasant high, which increases overall happiness that day. According to act utilitarianism, this villain’s action was morally good because it increased happiness. But this seems clearly wrong: his intention was mass murder, and many would have the intuition that what this bitter citizen did was bad, regardless of the accidental outcome. This example highlights a common criticism that utilitarianism ignores intentions when judging the morality of actions.

Problem: Ignores integrity

“Jim finds himself in the central square of a small South American town. Tied up against the wall are a row of twenty Indians, most terrified, a few defiant, in front of them several armed men in uniform. A heavy man in a sweat-stained khaki shirt turns out to be the captain in charge and, after a good deal of questioning of Jim which establishes that he got there by accident while on a botanical expedition, explains that the Indians are a random group of the inhabitants who, after recent acts of protest against the government, are just about to be killed to remind other possible protestors of the advantages of not protesting. However, since Jim is an honoured visitor from another land, the captain is happy to offer him a guest’s privilege of killing one of the Indians himself. If Jim accepts, then as a special mark of the occasion, the other Indians will be let off. Of course, if Jim refuses, then there is no special occasion, and Pedro here will do what he was about to do when Jim arrived, and kill them all… What should he do?

To these dilemmas, it seems to me that utilitarianism replies… that Jim should kill the Indian. Not only does utilitarianism give these answers but, if the situations are essentially as described and there are no further special factors, it regards them, it seems to me, as obviously the right answers. But many of us would certainly wonder whether, … that could possibly be the right answer at all… A feature of utilitarianism is that it cuts out a kind of consideration which for some others makes a difference to what they feel about such cases: a consideration involving the idea, as we might first and very simply put it, that each of us is specially responsible for what he does, rather than for what other people do. This is an idea closely connected with the value of integrity.”

– Bernard Williams, Utilitarianism: For and Against

Another potential issue for utilitarianism is that it ignores notions of personal integrity – that any ‘line in the sand’ you might wish to draw has to be ignored as soon as the consequences justify doing so.

This is illustrated by Bernard Williams’ Jim and the Indians example above: Jim has to choose between:

- Killing 1 innocent person to save 19 others, or

- doing nothing and letting all 20 die.

The point is not necessarily that it’s wrong to kill 1 person to save 19 others. The point is more that utilitarianism forces Jim to become a killer against his own moral convictions – that Jim is unable to have his own moral principles, such as ‘I won’t kill an innocent person’. Williams argues that utilitarianism alienates individuals from their own sense of integrity and moral identity, treating them merely as instruments for producing good consequences.

Negative utilitarianism

Where ordinary hedonistic utilitarianism focuses on maximising pleasure, negative utilitarianism focuses primarily on reducing suffering. So, negative utilitarianism says that the most important ethical goal is to minimise pain and suffering wherever possible.

The reasoning behind this approach is that suffering is often more urgent and morally pressing than pleasure. For example, the pain of severe illness or loss can feel much more intense than the joy of a pleasant experience. Because of this, negative utilitarians argue that preventing harm should take priority over creating happiness.

Potential response:

A common objection to negative utilitarianism is the so-called ‘extreme solution’ problem: if the primary goal is to minimise suffering, then the most effective way to eliminate all suffering might be to eliminate all sentient beings – i.e. to kill everyone. From a negative utilitarian perspective, ending all life would prevent any future pain or suffering, making it the morally preferable action. But this seems obviously wrong, and so, the objection goes, negative utilitarianism cannot be the correct moral theory.

References/further reading:

- Introduction to the Principles of Morals and Legislation by Jeremy Bentham

- Utilitarianism by John Stuart Mill

- On Liberty by John Stuart Mill

- Anarchy, State, and Utopia by Robert Nozick

- Utilitarianism: For and Against by J.J.C. Smart and Bernard Williams

- Stanford Encyclopedia of Philosophy entry on consequentialism

See also:

- Deontological ethics

- Virtue ethics